University Steps Up Data Science

Data is a booming business. From medicine and economics to environmental and political science, more and more data is being generated in just about every area of science and society. This exponential growth is driving the social and scientific importance of data science, the discipline devoted to analyzing data to generate new knowledge and applications. To expand its research and teaching in this field, the University of Zurich has established the Department of Mathematical Modeling and Machine Learning (DM3L) at the Faculty of Science.

“The new department combines mathematical research with subject-specific applications of data science,” explains Roland Sigel, Dean of the Faculty of Science. Reinhard Furrer, professor of applied statistics and interim head of the newly founded department, underscores the preeminent importance of mathematics: “It’s only thanks to the discipline of mathematics that it’s been possible to develop new tools in data science such as machine learning and deep learning, and mathematics is also crucial to their ongoing development.”

DM3L formally commenced operations in January 2024. It currently comprises four professorships in network science (Alexandre Bovet), risk analysis (Delia Coculescu), statistics (Reinhard Furrer) and deep learning (Jan Dirk Wegner), with more on the cards. The department’s first official scientific publication, an application-oriented paper on automated fact-checking, has just been released.

Automated fact-checking

This work, led by Alexandre Bovet, asks to what extent large language models (LLMs) such as OpenAI’s GPT-3.5 and GPT-4 can identify false information in media of all kinds. Bovet and his co-author presented the models with various statements and asked them to rate each one as true or false. Some of the test items were verified statements from politicians or factual questions about economics and politics; others were ambiguous statements. For the unambiguous statements, the results were astonishingly good: when GPT-4 could also run contextual Google searches, it evaluated them correctly with an accuracy of 89 percent. The results for the ambiguous statements were significantly worse. Overall, GPT-4 was more accurate than GPT-3.5, and performance also depended on the language used.

The authors conclude that language models have great potential for checking content on websites and in other media, and for supporting human fact-checkers. “But the systems aren’t one hundred percent trustworthy, which is why a human should still be involved in fact-checking,” says Alexandre Bovet.

Analyzing major risks

Large language models are built on artificial neural networks, the technology at the heart of deep learning and much of modern machine learning. One focus of the new department is developing machine learning methods that recognize structures in large data sets and generate hypotheses, for example to analyze major new risks, which is Delia Coculescu’s field of work. The specialist in quantitative risk analysis points to unprecedented threats to globalized economies, such as cyber-attacks, supply chain problems and health crises like pandemics, with climate change as an exacerbating factor. She notes that the complexity of these threats exceeds the capabilities of current analytical methods. “One of the main goals of our research is to develop innovative methods for assessing and mitigating non-standardized risks,” says Coculescu.

In collaboration with the Departments of Geography and Finance at UZH, her team is developing a machine learning model that combines economic variables with data on biodiversity loss and temperature anomalies. “Neural networks are particularly efficient in contexts where complex optimization problems arise,” she explains. Once completed, the project is intended to support international efforts to combat climate change and species loss. Her project also demonstrates the importance of interdisciplinary collaboration within the department. “Data science transcends disciplinary boundaries and is an element that unifies both the institutes of the Faculty of Science and the university as a whole,” says Dean Roland Sigel. This interdisciplinary approach is also evident in another new project from the department.

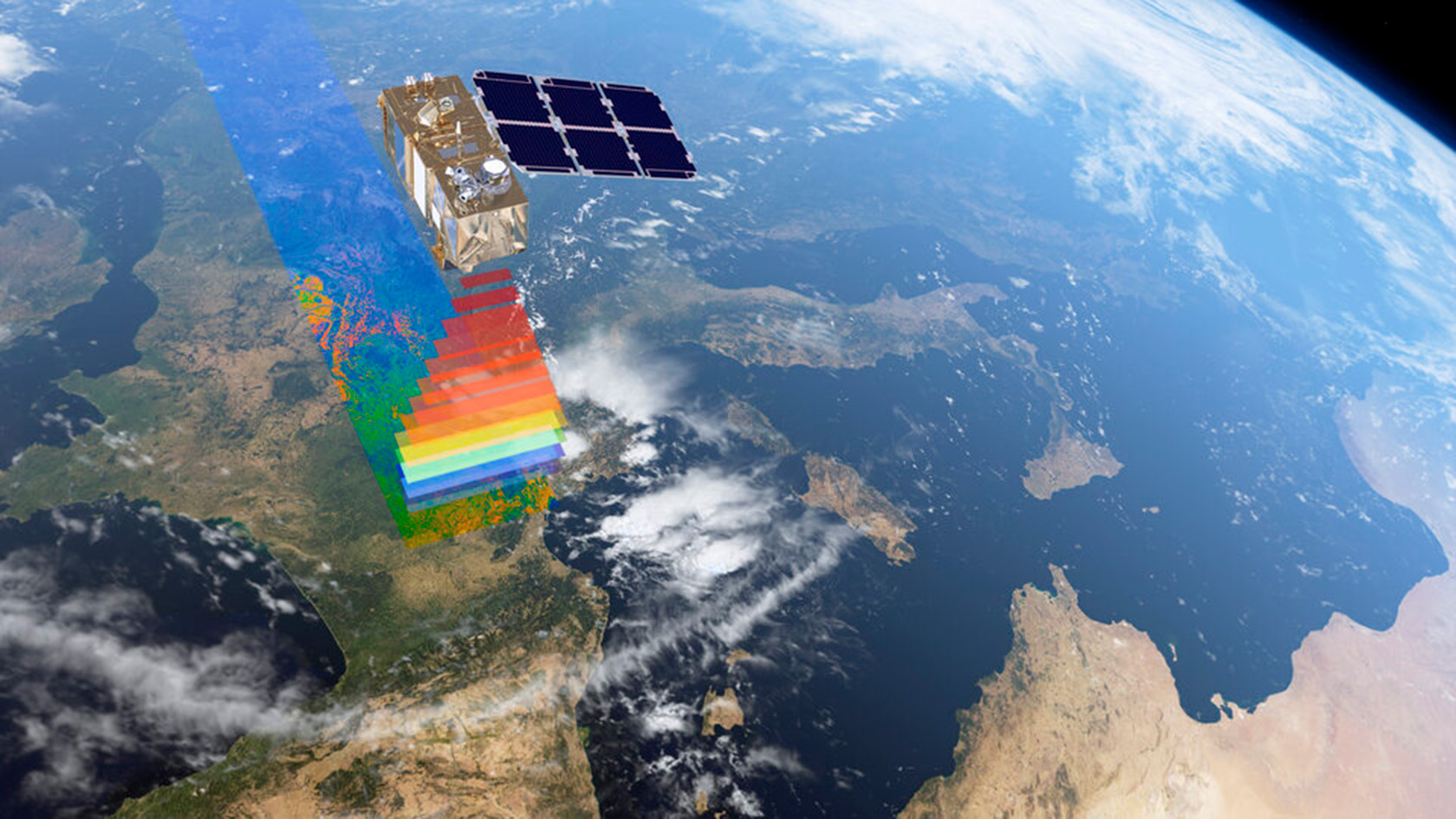

Satellite data and neural networks

In this project, led by Jan Wegner, one of the department’s founding professors, an artificial neural network was used to create a high-resolution map of cocoa plantations in Ghana and Côte d’Ivoire. The researchers trained the deep learning network to recognize cocoa plantations in satellite images of vegetation and then worked with people in the region to verify the map on the ground. Combining satellite remote sensing with deep learning produced a precise map that, for the first time, shows in detail where cocoa is being grown legally and illegally. The work responds to a worrying trend: contrary to sustainable cultivation practices, cocoa plantations are expanding into protected forest areas. According to the maps, illegal cocoa cultivation has consumed over a third of protected forest areas in Côte d’Ivoire; in Ghana the figure is lower but still 13 percent. “These groundbreaking maps are a decisive step toward promoting nature conservation and sustainable development in the regions studied,” says Jan Wegner.

Historical forest analyses

Staff of the new department often work at the interface between pure mathematical research and applications in other disciplines. Roman Flury, for example, working under the direction of Reinhard Furrer, has developed a statistical method to identify spatial scales and dominant features in data sets. This work made it possible to use Finnish forest inventory data from the 1920s to determine the basal areas of the most common Finnish trees, such as pine, spruce, birch and other native deciduous species. As a result, anthropogenic influences on the distribution of tree species could be distinguished retrospectively from natural, site-related ones. “Before this, it wasn’t possible to do this kind of historical analysis of forest ecology,” explains Reinhard Furrer.

Stepping up teaching

These examples illustrate how the department applies data science in a range of specialist disciplines while also pursuing application-oriented fundamental research. “We’re contributing both to the further development of data science and to solving societal challenges,” says Dean Roland Sigel. Alongside research, teaching is also being expanded: a Bachelor’s program in Applied Mathematics and Machine Learning will be offered from fall 2025.